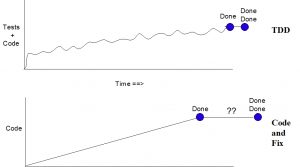

This graph, intended to show the differences in outcome between using TDD and not using it, is a sketch of my observations combined with information extrapolated from other people’s anecdotes. One datapoint is backed by third-party research: studies show that it takes about 15% longer to produce an initial solution using TDD. Hence the graph shows the increased amount of time under TDD to get to “done.”

The tradeoff mentioned in the title is that TDD takes a little longer to get to “done” than code ‘n’ fix. It requires incremental creation of code that is sometimes replaced with incrementally better solutions, a process that often results in a smaller overall amount of code.

When doing TDD, the time spent to go from “done” to “done done” is minimal. When doing code ‘n’ fix, this time is an unknown. If you’re a perfect coder, it is zero time! With some sadness, I must report that I’ve never encountered any perfect coders, and I know that I’m not one. Instead, my experience has shown that it almost always takes longer for the code ‘n’ fixers to get to “done done” than what they optimistically predict.
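The arithmetic behind this tradeoff can be sketched in a few lines. This is a minimal illustration, not a measurement: the only figure taken from the post is the roughly 15% overhead to reach “done,” and the function names, parameters, and any rework values passed in are hypothetical.

```python
# Rough model of the "done" vs. "done done" tradeoff.
# Only the 15% overhead comes from the post; everything else is a
# hypothetical input chosen by the caller.
TDD_OVERHEAD = 0.15  # fraction of extra time to reach "done" under TDD


def time_to_done_done(base_time, rework, use_tdd):
    """Return total time to reach "done done".

    base_time: time to reach "done" with code 'n' fix (any unit)
    rework:    time spent between "done" and "done done"
    use_tdd:   whether the TDD overhead applies to the initial build
    """
    initial = base_time + base_time * TDD_OVERHEAD if use_tdd else base_time
    return initial + rework


def break_even_rework(base_time, tdd_rework):
    """Return the code 'n' fix rework time above which TDD finishes first."""
    return base_time * TDD_OVERHEAD + tdd_rework
```

Under this model, TDD comes out ahead whenever the code ‘n’ fix rework exceeds 15% of the baseline plus whatever rework TDD still needs. For a hypothetical 100-hour baseline with 5 hours of TDD rework, that threshold is about 20 hours.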

You’ll note that I’ve depicted the overall amount of code in both graphs to be about the same. In a couple of cases now, I’ve seen a team take a TDD mentality, apply legacy test-after techniques, and subsequently refactor a moderate-sized system. In both cases they drove the amount of production code down to less than half the original size, while at the same time regularly adding functionality. But test code is usually as large as, if not a bit larger than, the production code. In both of these “legacy salvage” cases, the total amount of code ended up being more or less a wash. Of course, TDD provides a large bonus–it produces tests that verify, document, and allow further refactoring.

Again, this graph is just a rough representation of what I’ve observed. A research study might be useful for those people who insist on one.

Comments

Anonymous May 8, 2010 01:04:00 AM

Sorry, Jeff, but I don’t believe it and cannot find any corroboration for your contention that the time from done to done-done is “minimal.” The time from done to done-done is shorter with TDD, but only on trivial projects is it as short as you indicate. On large projects, functional tests—not generally a part of TDD—often reveal important gaps and other problems that require more than a sprint’s worth of time to fix.

Jeff Langr May 10, 2010 07:35:00 AM

Good thought. The projects I’ve been on where this time was so short were ones where the iterations were also short–not 30-day sprints, but one- and sometimes two-week iterations.

This isn’t the norm (most places require all that test-after time you speak of to run more robust tests), but these were places that relied heavily on automated tests to verify things. Coupled with very short iterations, this allowed the teams to release software with high confidence, knowing that the things they would find in production (likely to be low severity) could quickly be rectified. Rare, but it does exist.

The key point is that when you do get to the test-after period (i.e., the time between done and done done) after having done TDD, you find far fewer problems to fix. In an environment where you have not been doing TDD, the defects that arise can easily cost many times more than in a TDD environment.

Maybe “minimal” is not a good word. Substitute “reduced.”

Anonymous February 6, 2011 12:35:00 AM

I think you’re comparing a project that uses TDD to a project that writes no unit tests until “done,” right?

Why are you ignoring the projects that write unit tests after each unit is written?

This is faster than both the approaches you describe. It’s faster than TDD because you write far fewer tests that you don’t need and faster than your other (waterfall?) approach because you find problems sooner.

/Shmoo.
